Make self-service possible for Ready Assess

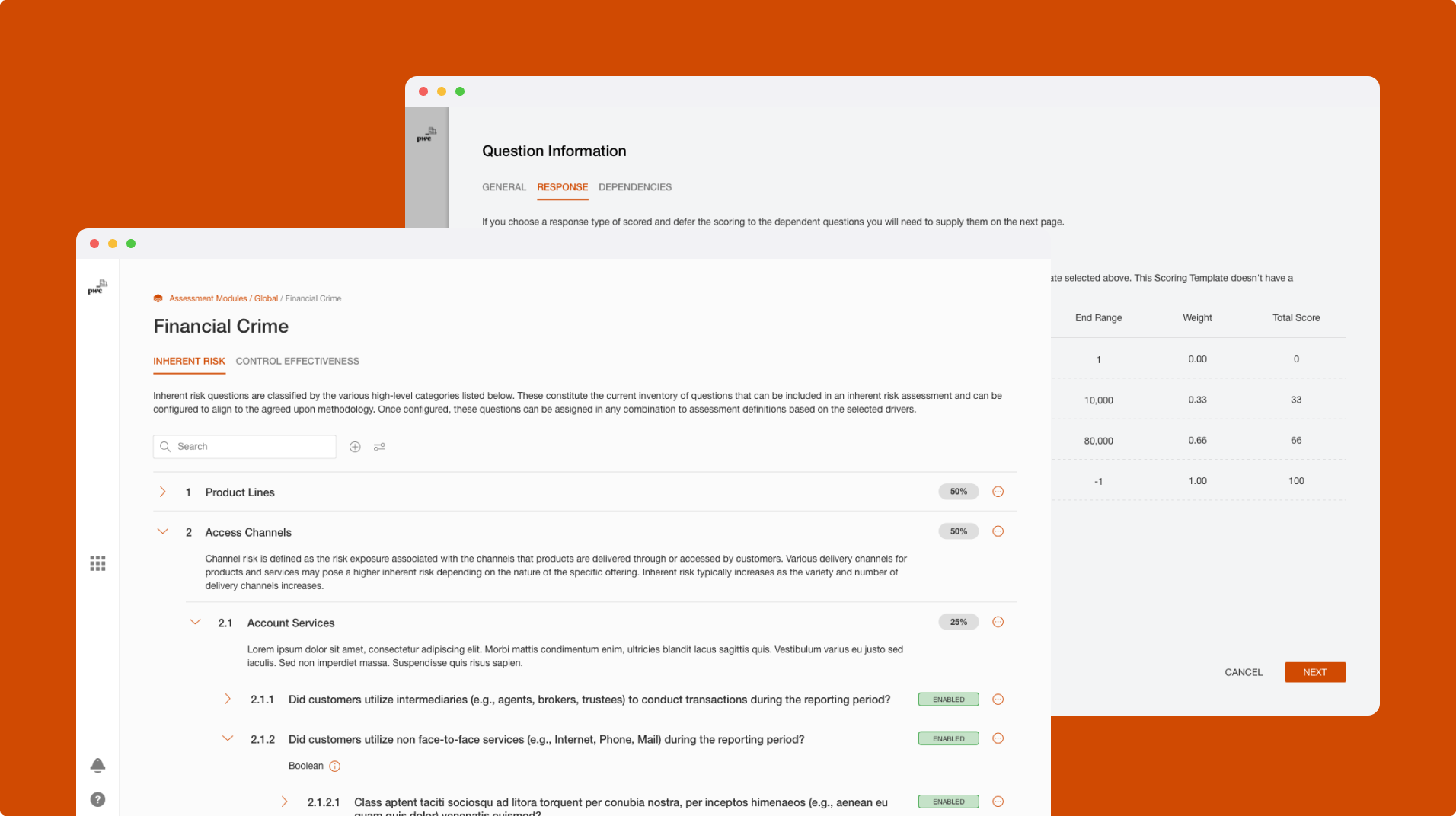

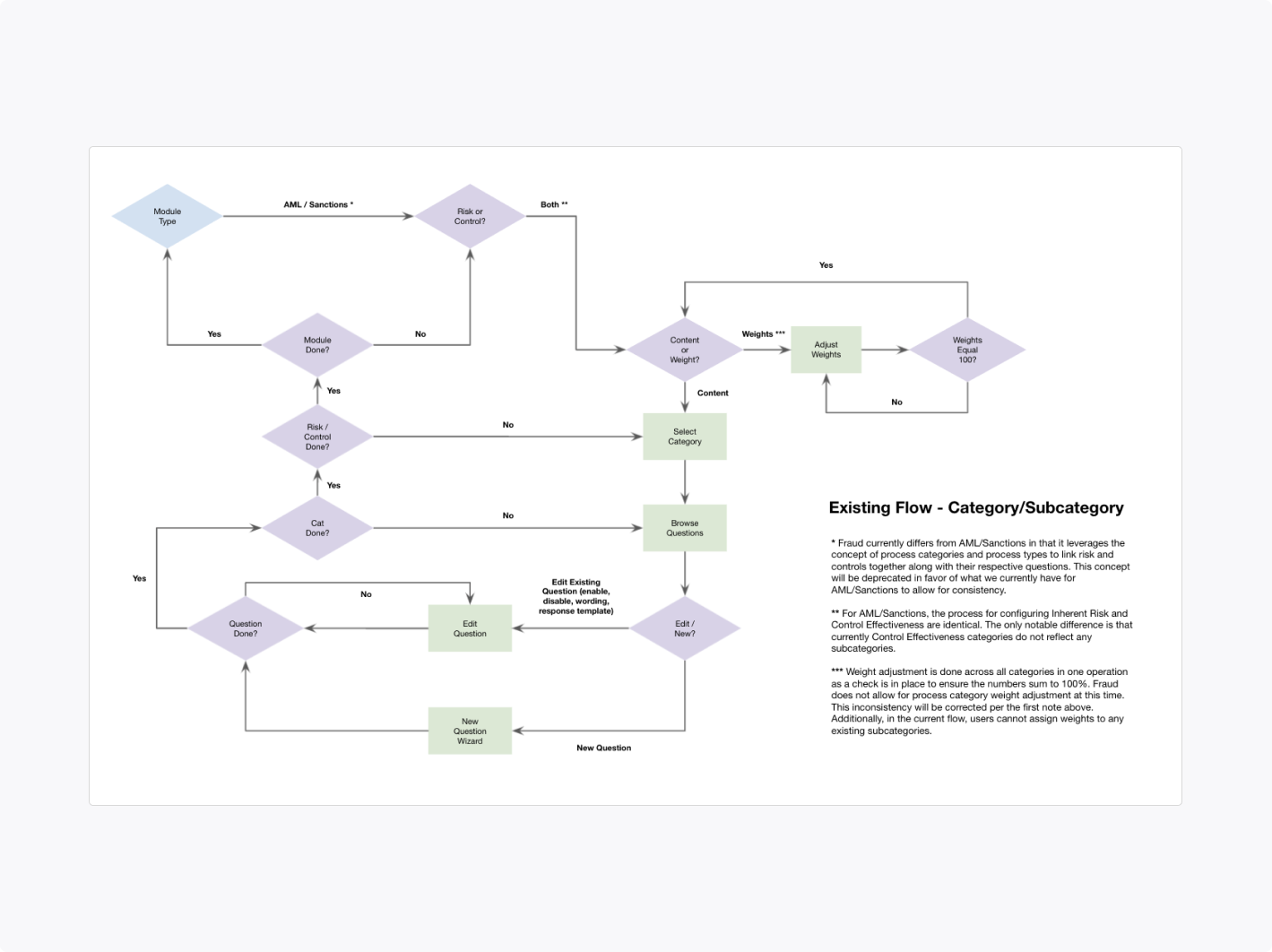

PwC had previously launched Ready Assess as an internal tool that risk consultants managed on behalf of clients. Leadership now wanted to transition it into a self-service product for compliance teams at financial institutions. I was tasked with building the authoring experience and audit trail to help these institutions run assessments.

Timeline

8 months

Team

Managing Director, Product Owner, 1 PM, 3 engineers

Role

Lead Designer

Methods

SME Interviews, User Journey Map, User Flow, Competitive Analysis, Visual Design, Prototyping

-

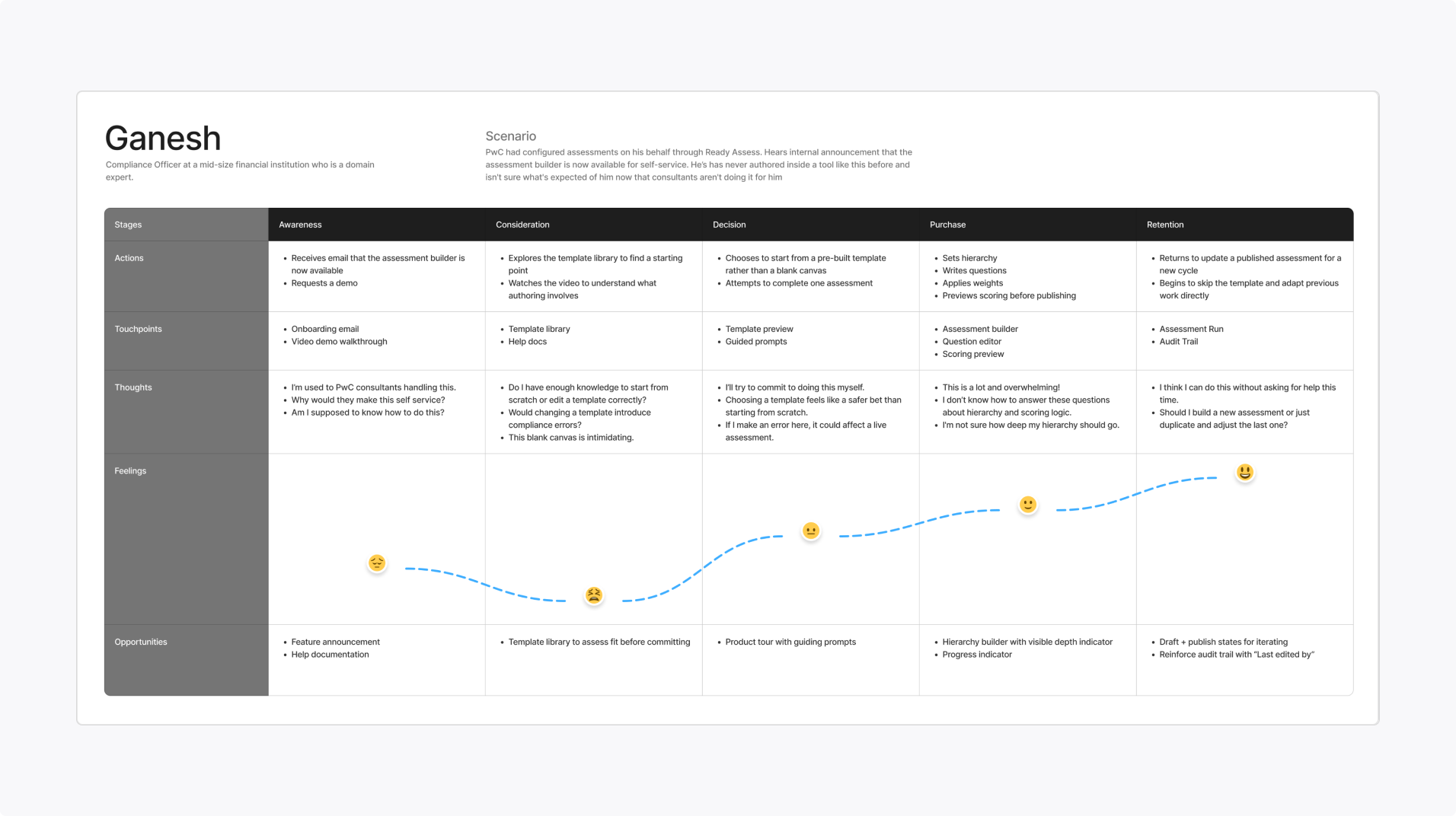

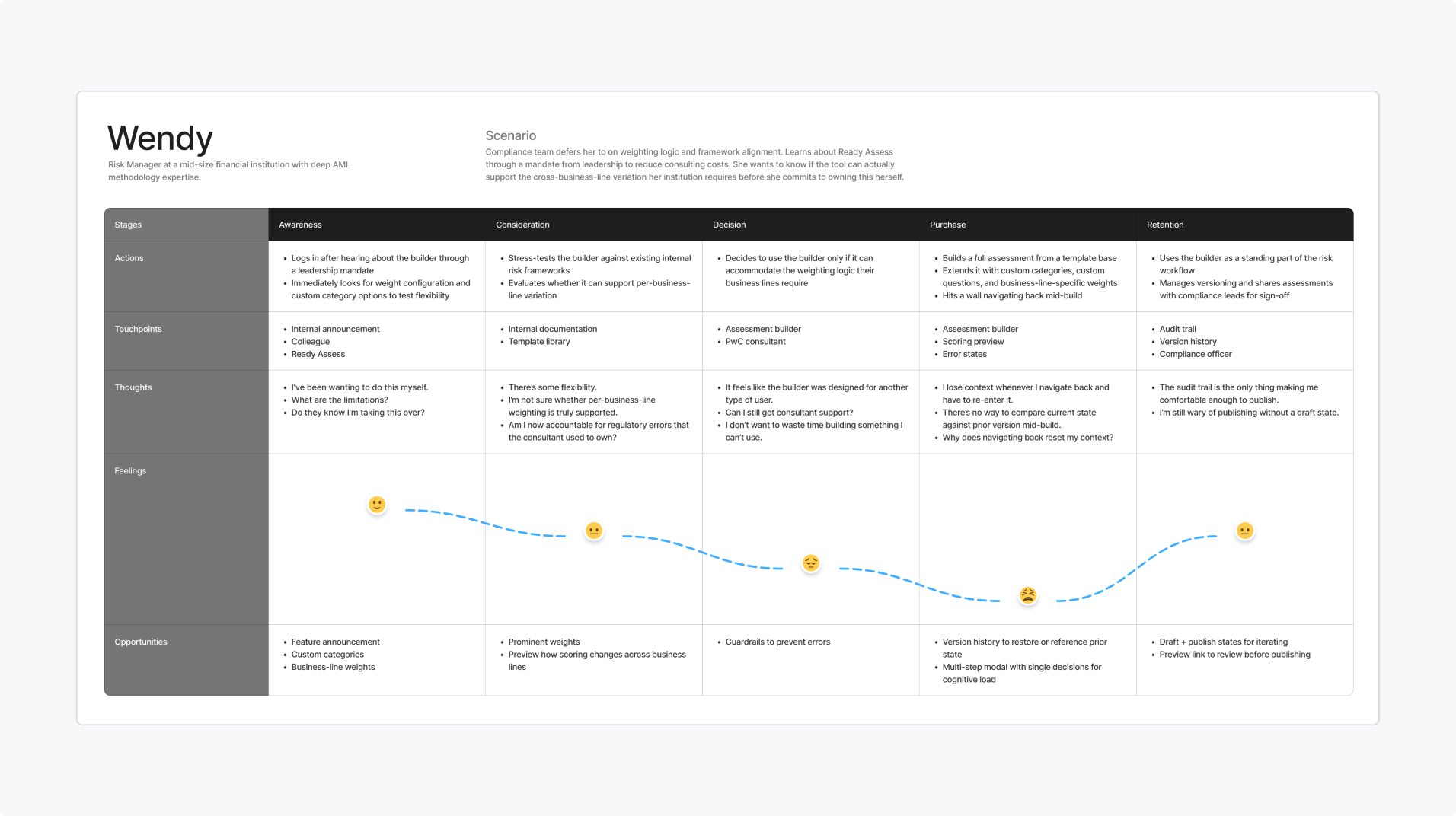

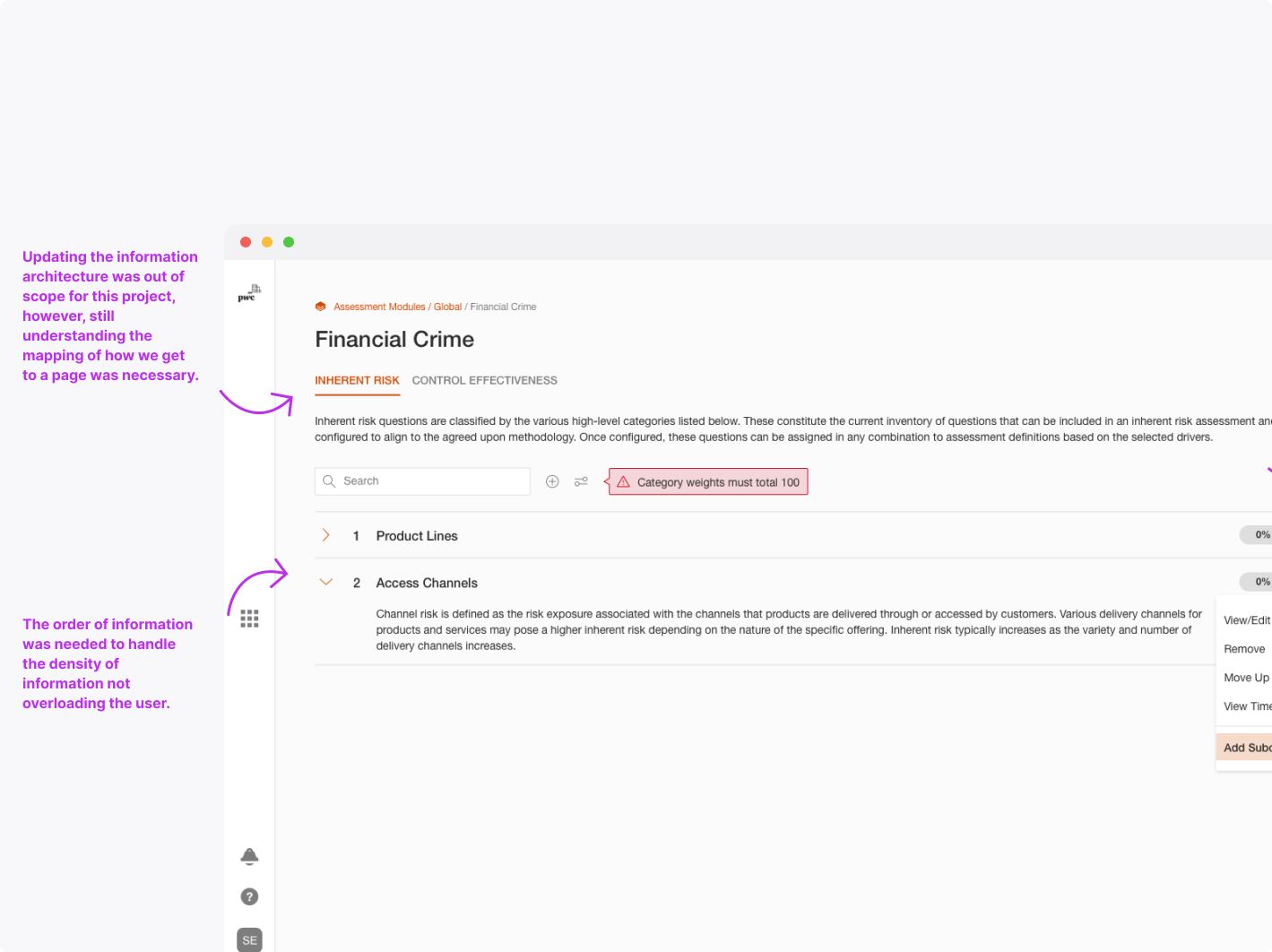

Frustrating to have to go back and forth to recall the current instance

Not able to see the exact hierarchy of the sum of all parts

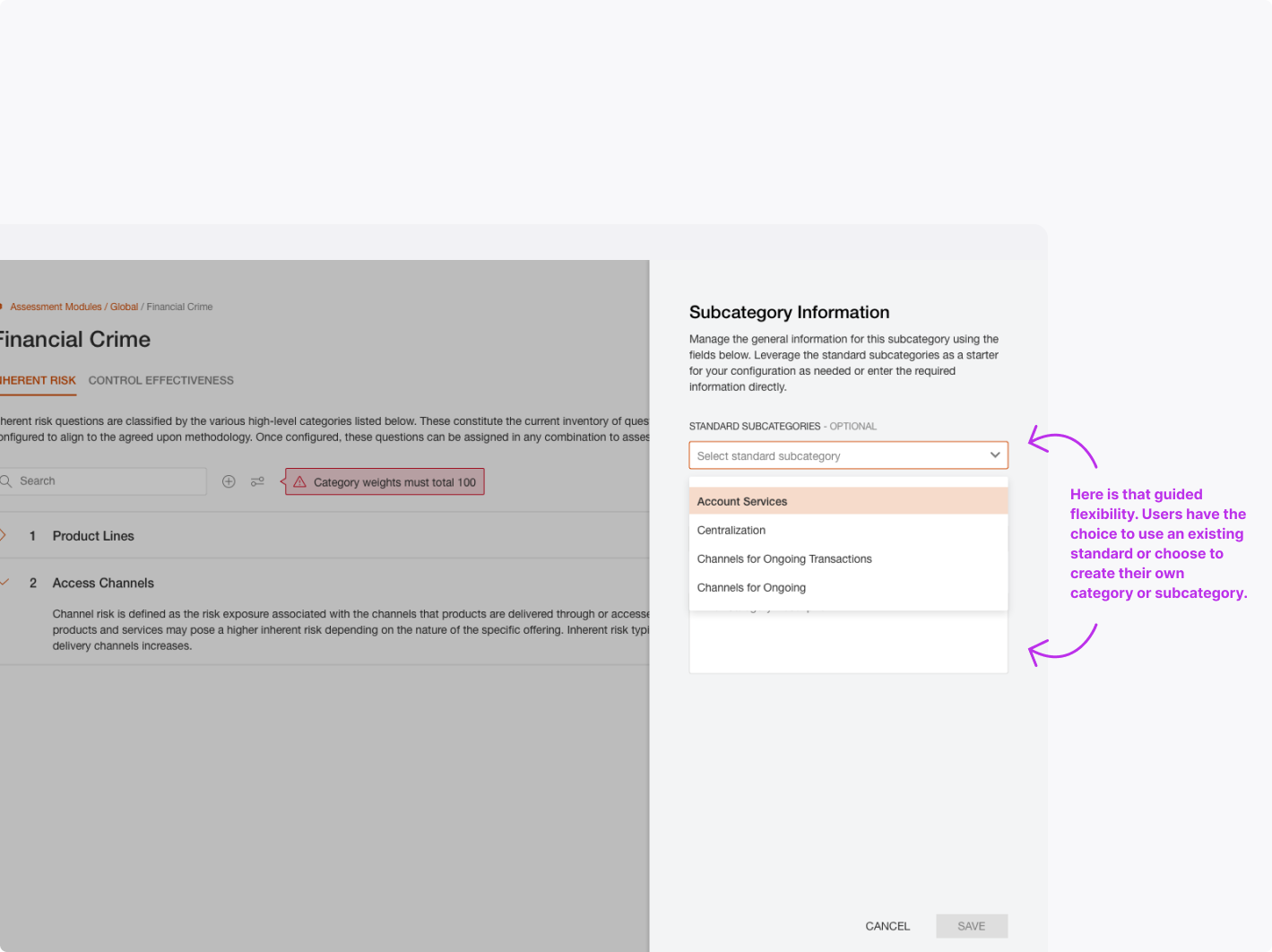

Unable to create unique categories and subcategories instead of selecting standard ones

Unsure of whether to apply weights at the global or individual level

-

Business: Ensure that the underlying risk logic meets regulatory standards

User: How to organize risk categories, write questions, and apply scoring

Defining the problem: How might we help compliance teams build and run consistent risk assessments, without sacrificing the flexibility different business lines need?

-

Help users start without expertise

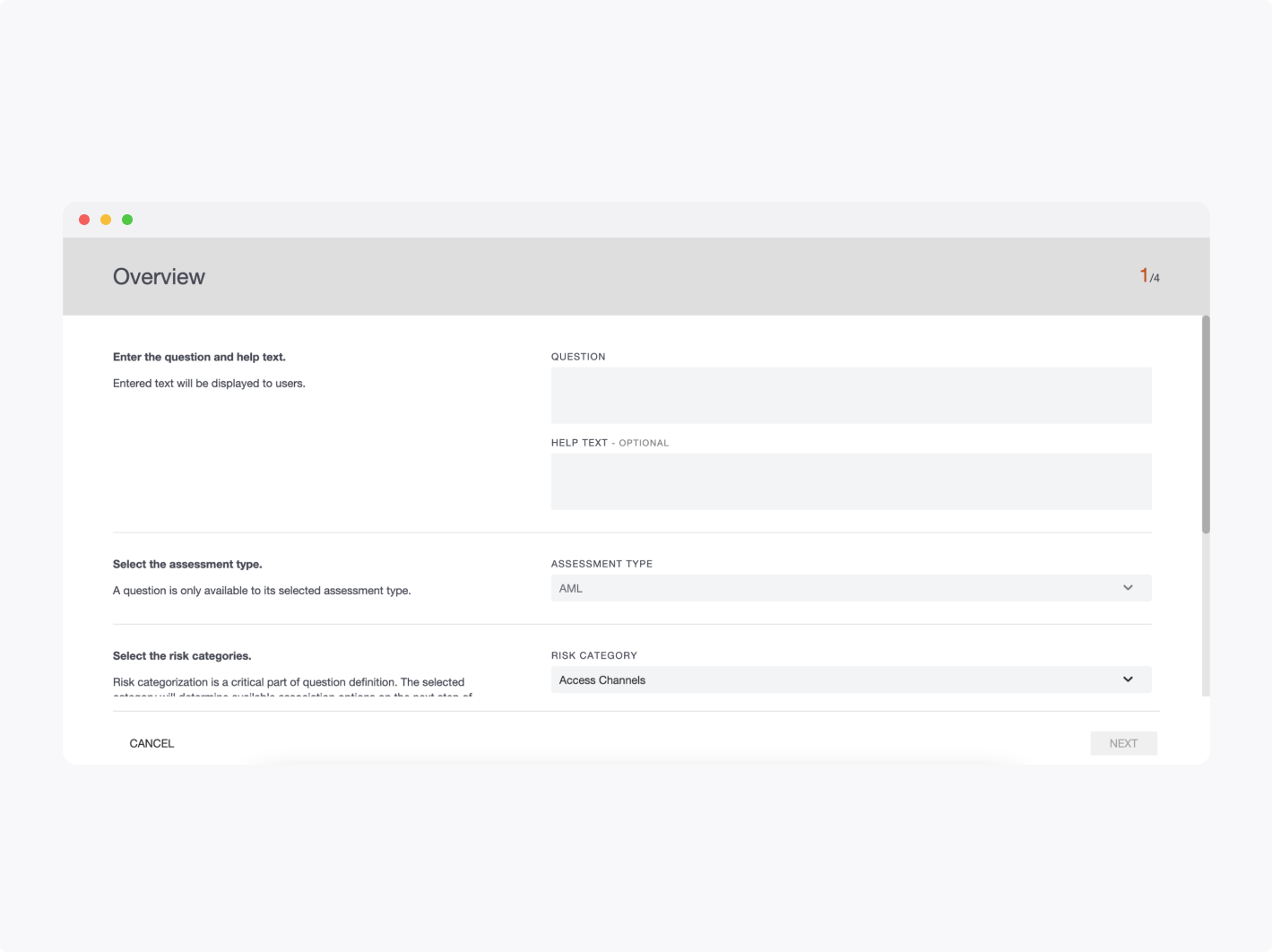

Users shouldn't need deep AML methodology knowledge just to begin building an assessment

Make scoring logic visible while authoring

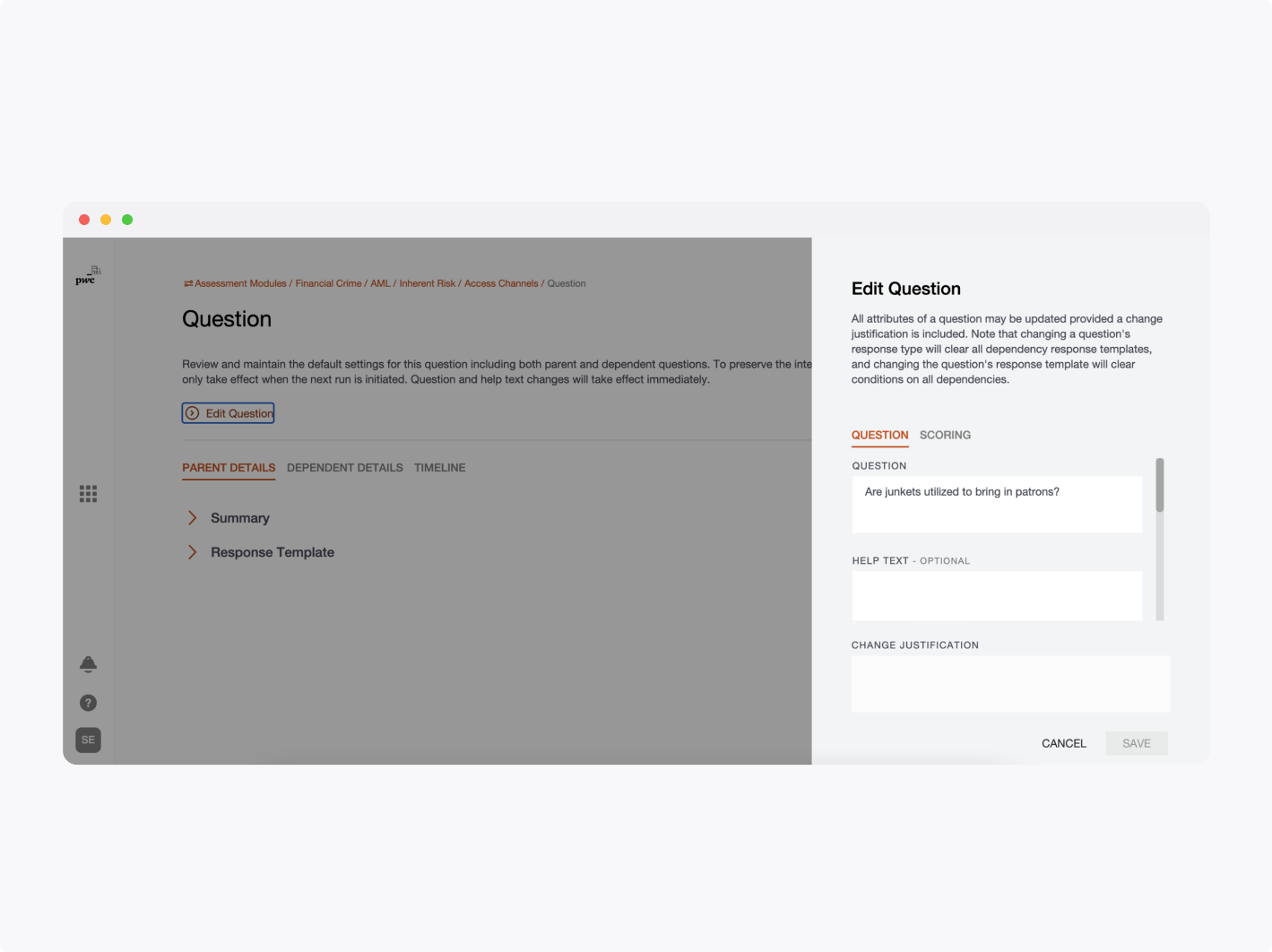

Authors need to understand how their question and weighting choices affect the final risk score before they publish

Allow customization without breaking standardization

Different business lines need flexibility, but not at the cost of comparability across the organization

-

A pre-built template library that users can start from, adapt, or use as is

Real-time scoring preview that updates as questions are added, weighted, or reordered

Users can extend the assessment with their own questions within guardrails
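To make the scoring preview idea concrete, here is a minimal sketch of how a two-level weighted score could be recomputed whenever an author adds or reweights a question. It is an illustration only, not the actual Ready Assess risk logic: the `Question` and `Category` types, the 0-100 score scale, and the weighted-average formula are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    text: str
    weight: float  # assumed: relative importance within its category
    score: float   # assumed: answer mapped onto a 0-100 risk scale


@dataclass
class Category:
    name: str
    weight: float  # assumed: relative importance across the assessment
    questions: list[Question] = field(default_factory=list)


def weighted_mean(pairs):
    """Return sum(weight * value) / sum(weight), or 0.0 if total weight is 0."""
    pairs = list(pairs)
    total_weight = sum(w for w, _ in pairs)
    if total_weight == 0:
        return 0.0
    return sum(w * v for w, v in pairs) / total_weight


def category_score(category):
    """Individual-level weights: combine the questions inside one category."""
    return weighted_mean((q.weight, q.score) for q in category.questions)


def preview_score(categories):
    """Global-level weights: combine category scores into one preview value."""
    # Skip categories with no questions yet so an empty draft section
    # does not drag the preview toward zero.
    scored = [c for c in categories if c.questions]
    return weighted_mean((c.weight, category_score(c)) for c in scored)


# Hypothetical draft assessment used only for illustration.
draft = [
    Category("Customer risk", weight=2, questions=[
        Question("Share of high-risk customers?", weight=3, score=80),
        Question("Onboarding checks automated?", weight=1, score=40),
    ]),
    Category("Geographic risk", weight=1, questions=[
        Question("Exposure to sanctioned regions?", weight=1, score=10),
    ]),
]

print(category_score(draft[0]))  # 70.0
print(preview_score(draft))      # 50.0
```

Calling `preview_score` again after every edit is the simplest way to keep the preview current, and it also shows authors where each kind of weight applies: question weights act inside a category, while category weights act across the whole assessment.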

Further Context

Competitive analysis of potential solutions

Early Ideations

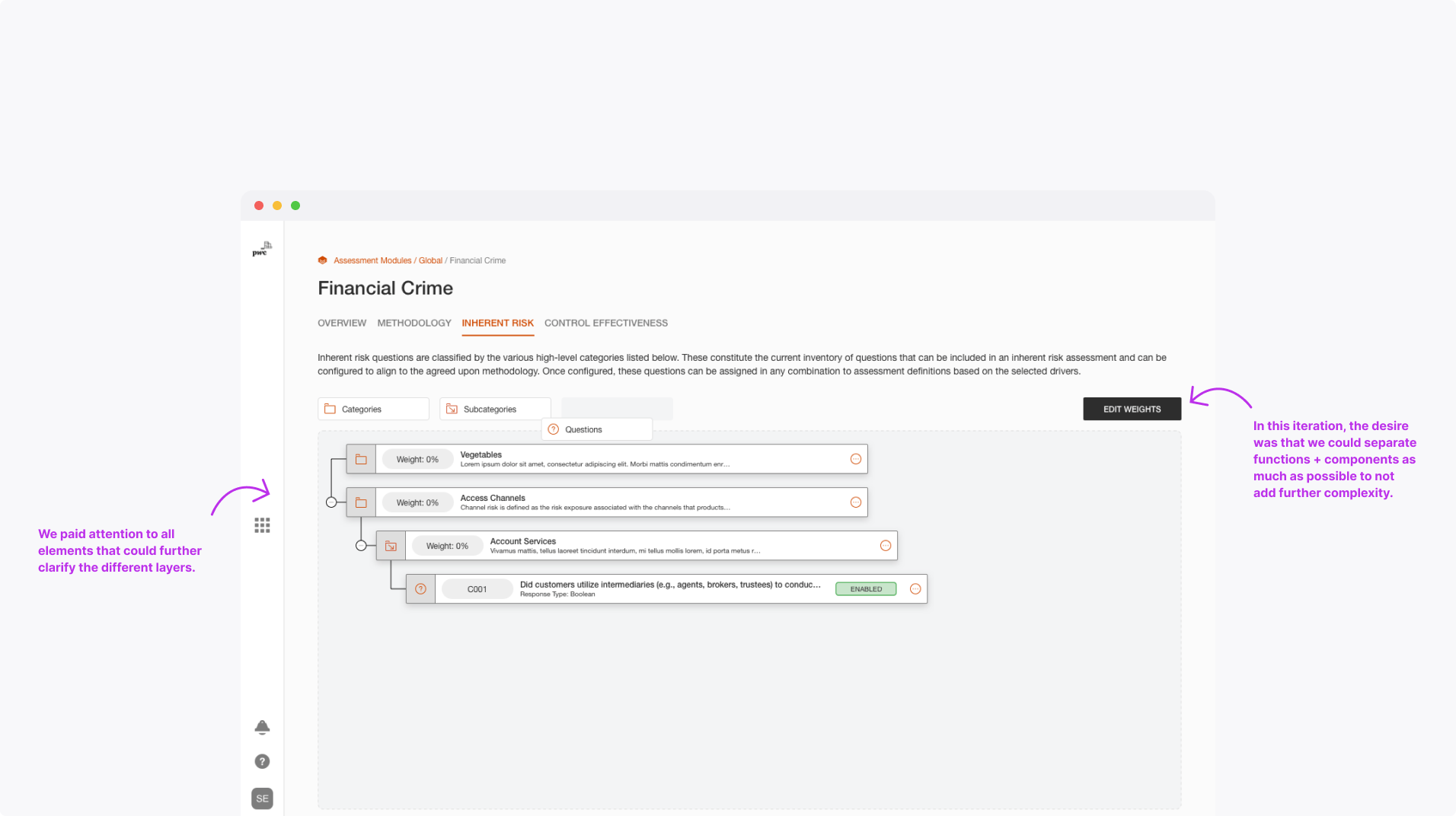

Drag + Drop

Our managing director liked the idea of giving users a high level of interaction while building their assessments. They believed that dragging and dropping tree nodes would help users feel in full control of creating their hierarchies.

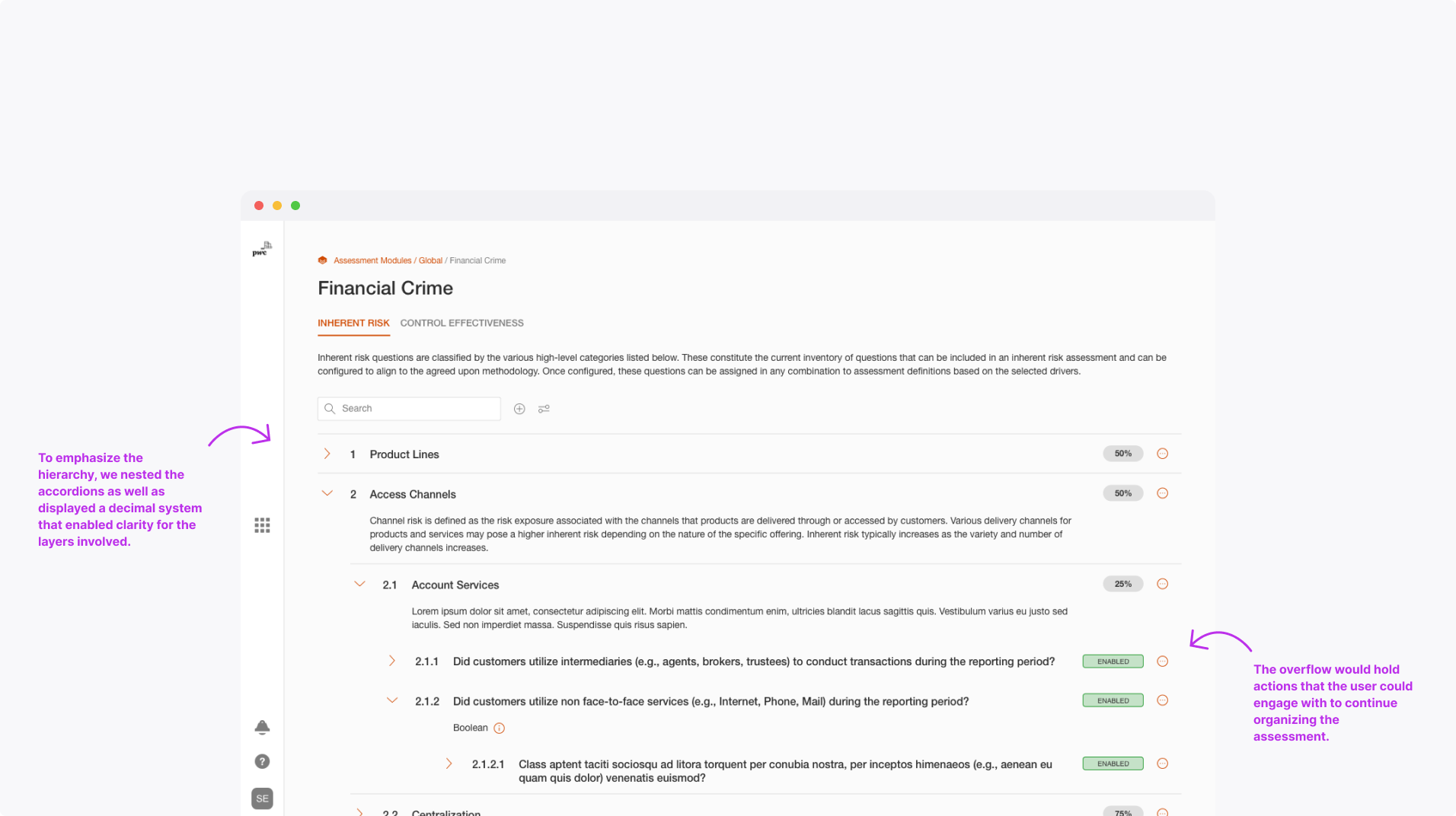

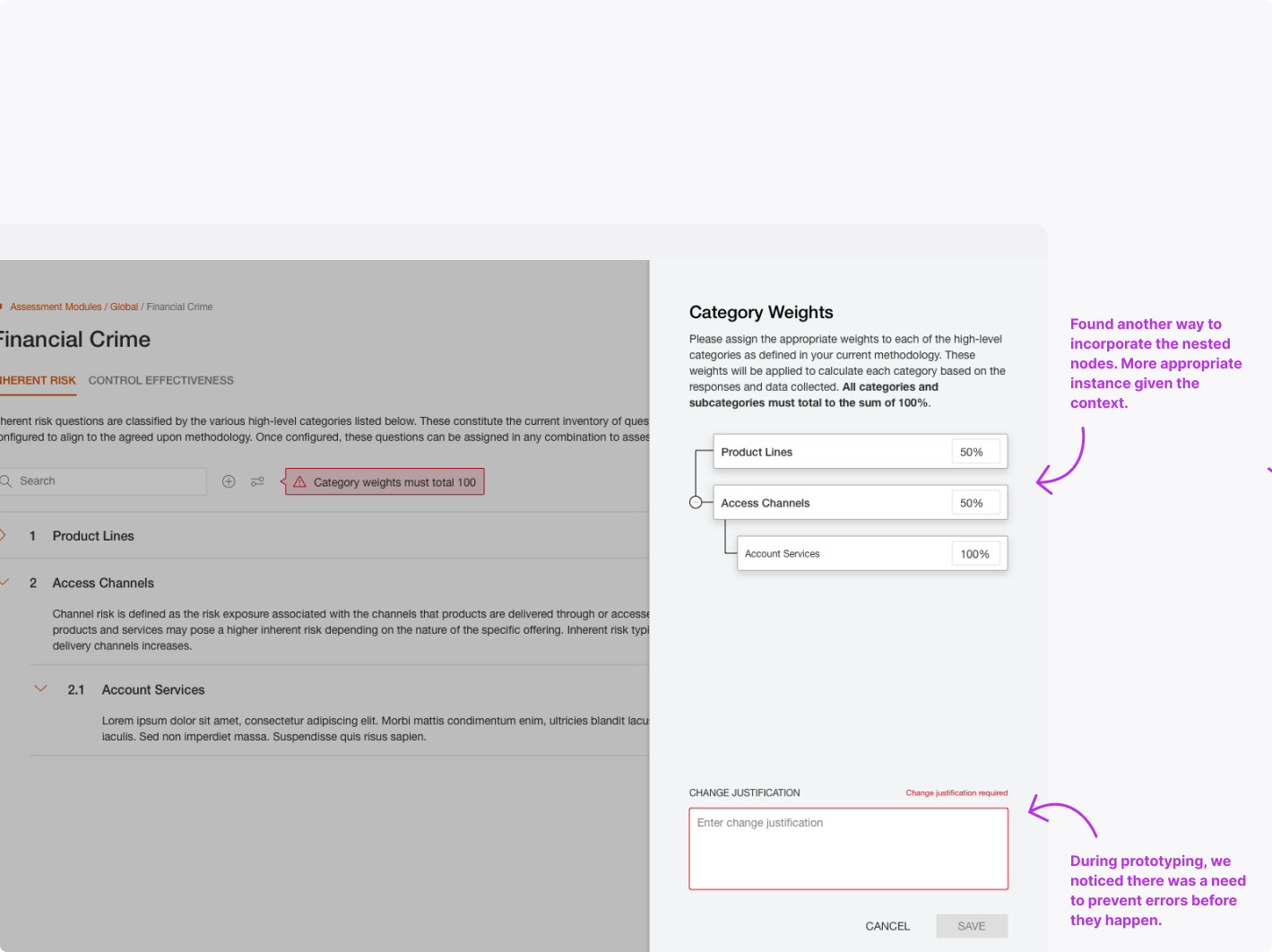

Nested

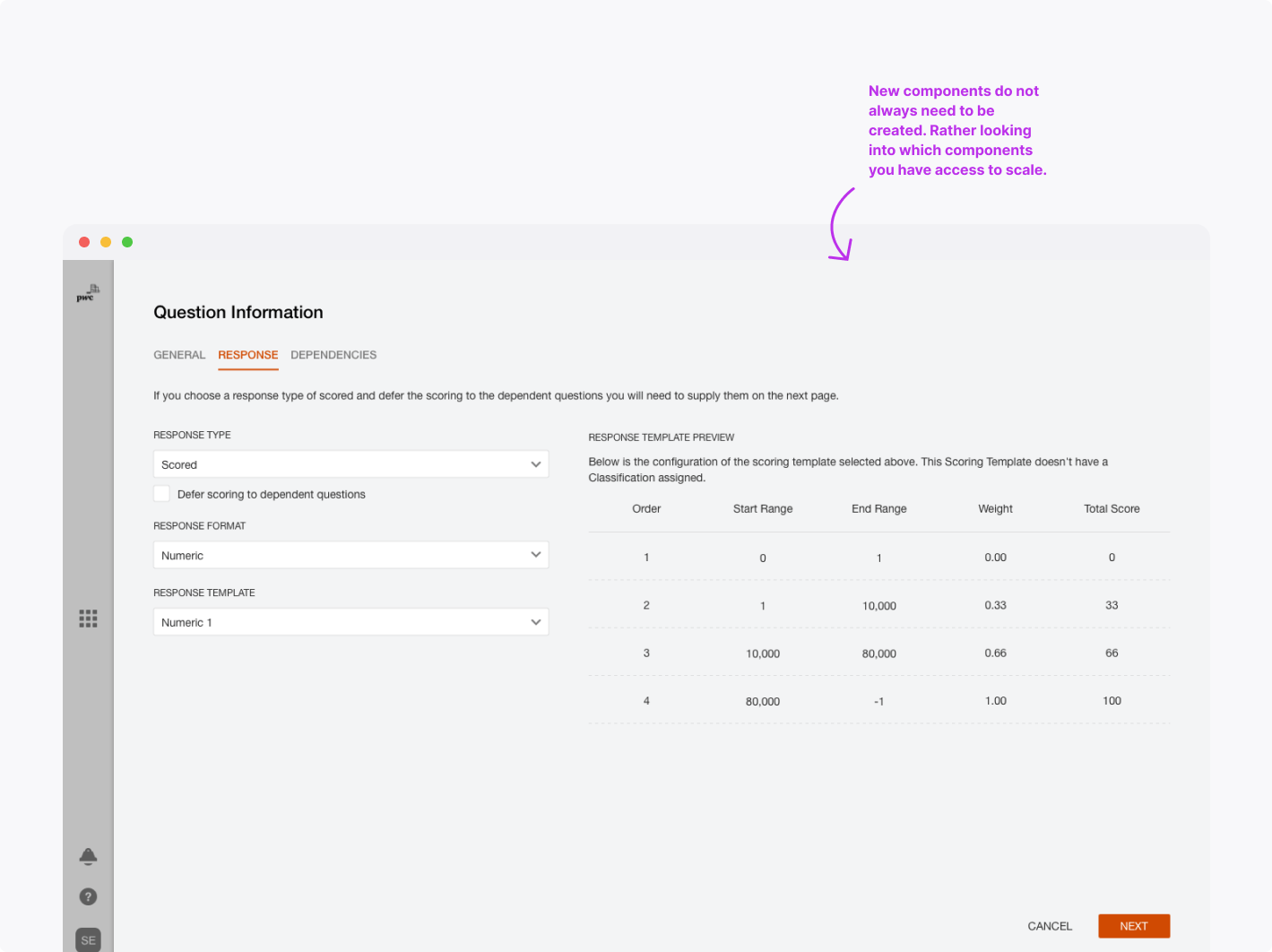

Accordions were components we already used within our AppKit. They were not being used in the same manner, but extending their capabilities would be less of an engineering lift. They also still provided the hierarchy we liked from the tree nodes.

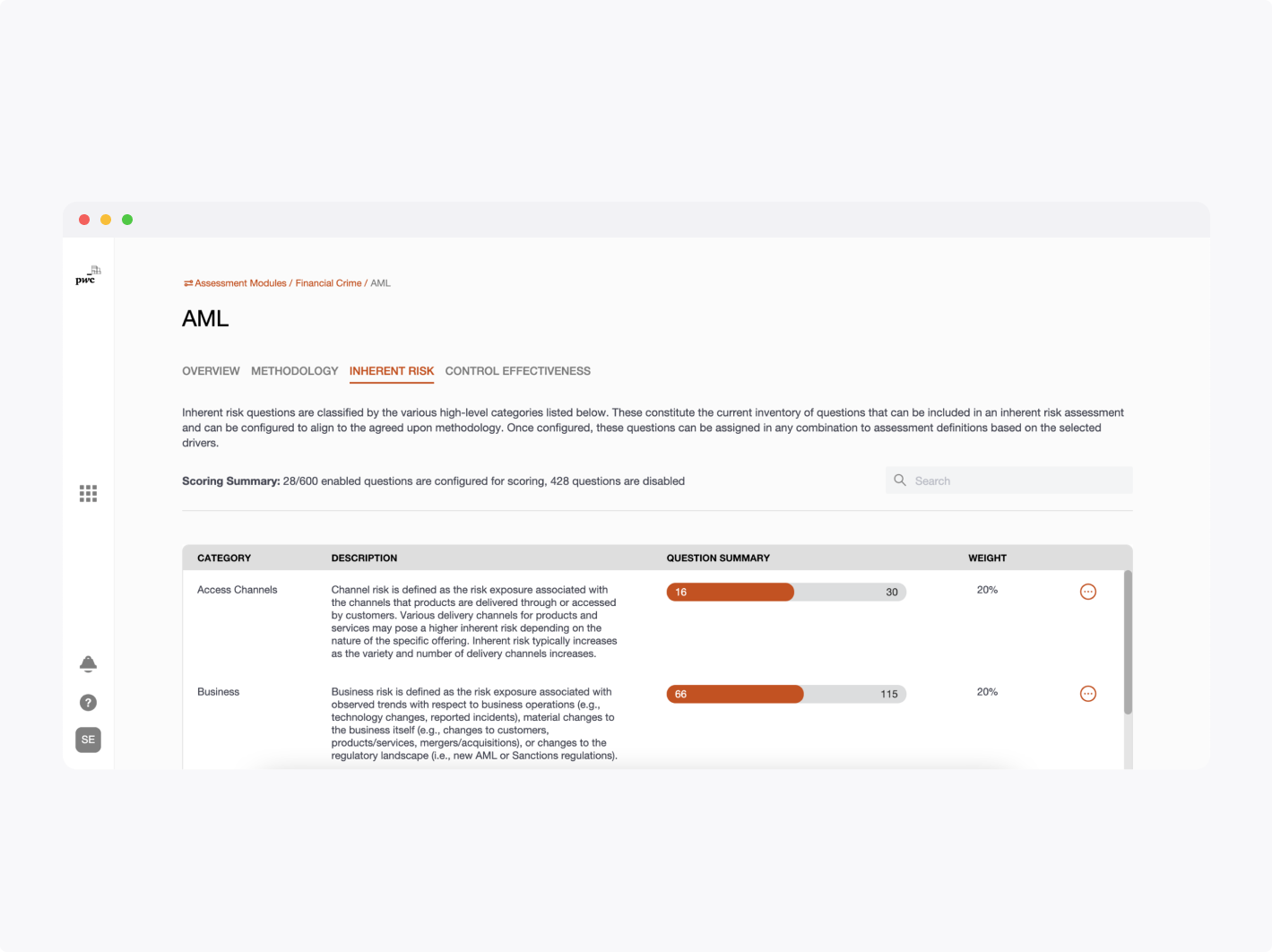

Final Designs

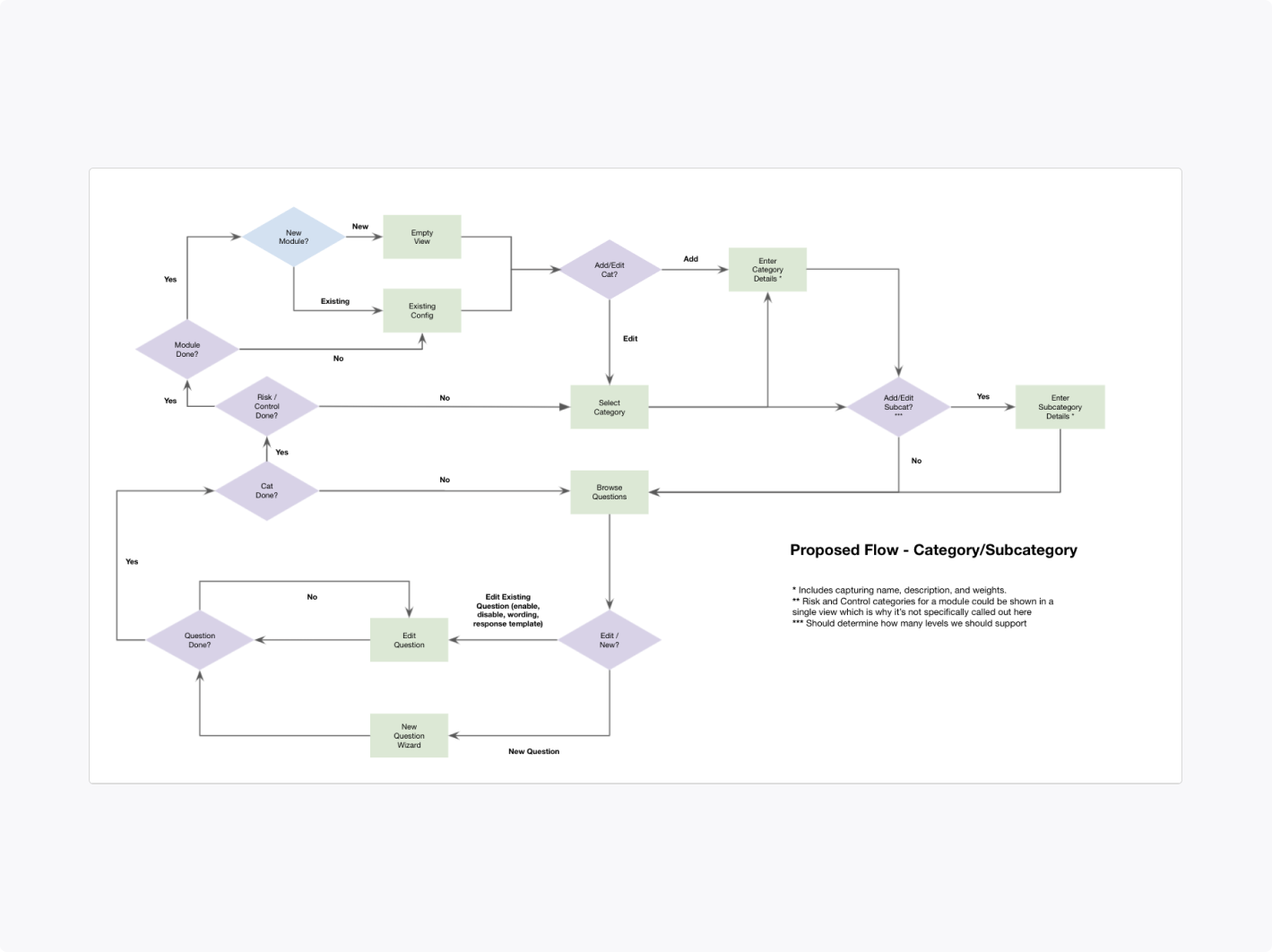

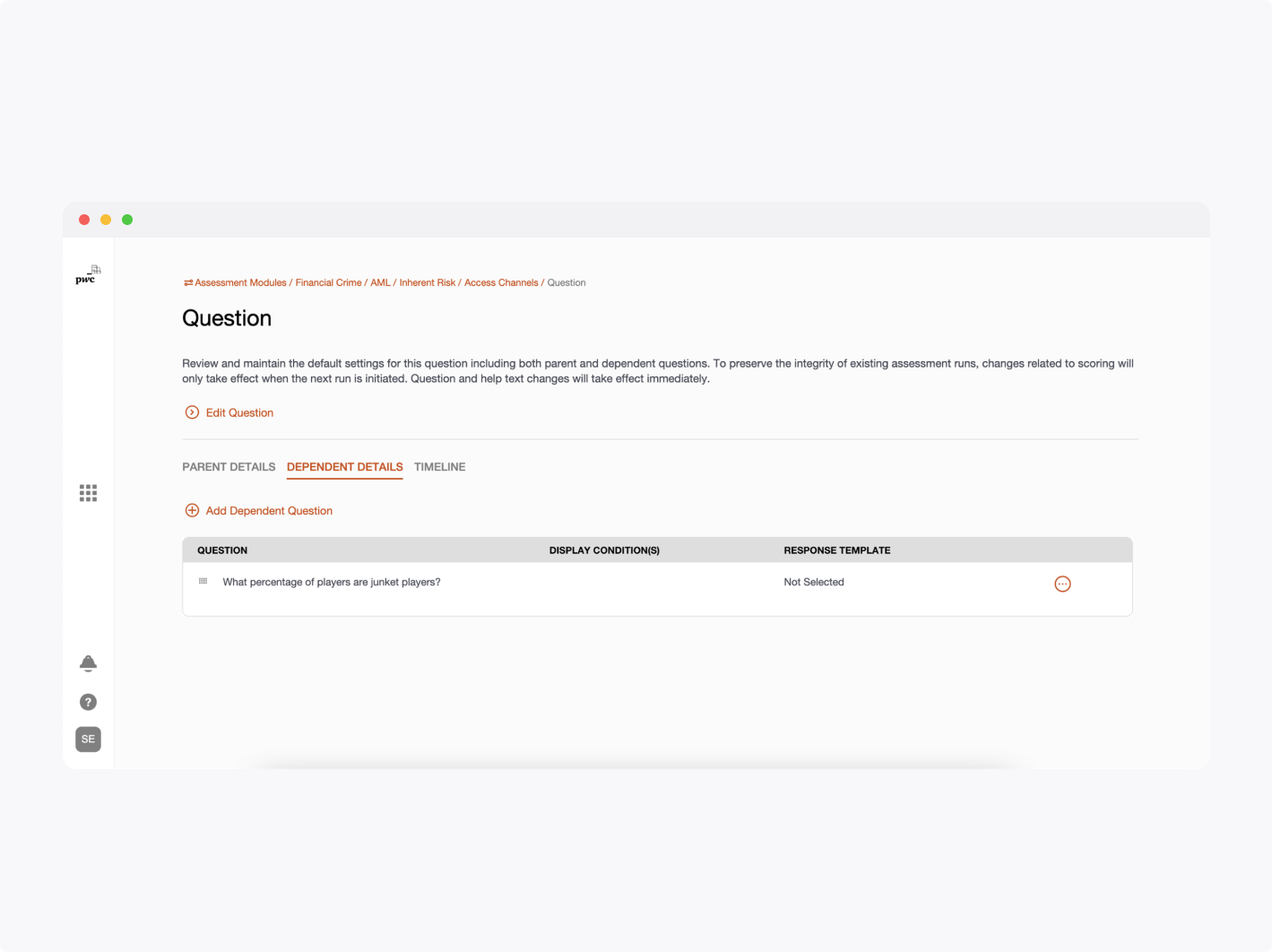

After compiling product requirements, finalizing the user flow, and locking down the structure, I focused on the authoring experience where users could add or edit information. This involved prototyping interactions that addressed the feedback we received, prevented errors, and kept users' attention focused throughout the process.

Results

Measure task success rate to track how often users complete or abandon tasks

Use custom event tracking to review time to complete tasks

Review Customer Effort Score (CES) results to see how much effort users feel tasks require

Create cohorts to view customer churn rate
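
As a rough illustration of the first two measures, the sketch below derives a task success rate and an average time to complete from a small event log. The event names, the tuple layout, and the sample values are hypothetical and do not come from Ready Assess data; a real setup would read these from whichever analytics tool is in use.

```python
from collections import defaultdict

# Hypothetical event log: (session_id, event_name, seconds_since_session_start)
events = [
    ("s1", "task_started", 0),
    ("s1", "task_completed", 240),
    ("s2", "task_started", 0),
    ("s2", "task_abandoned", 95),
    ("s3", "task_started", 0),
    ("s3", "task_completed", 360),
    ("s4", "task_started", 0),
    ("s4", "task_completed", 300),
]


def summarize(events):
    """Return the task success rate and average seconds to complete."""
    # Group event timestamps by session so each session counts once.
    by_session = defaultdict(dict)
    for session_id, name, seconds in events:
        by_session[session_id][name] = seconds

    started = [s for s in by_session.values() if "task_started" in s]
    completed = [s for s in started if "task_completed" in s]
    durations = [s["task_completed"] - s["task_started"] for s in completed]

    return {
        "success_rate": len(completed) / len(started) if started else 0.0,
        "avg_seconds_to_complete": (
            sum(durations) / len(durations) if durations else 0.0
        ),
    }


summary = summarize(events)
print(summary)  # {'success_rate': 0.75, 'avg_seconds_to_complete': 300.0}
```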